Key Takeaways

- OVIE uses a single image for training, breaking from traditional multi-view methods.

- The model leverages a monocular depth estimator to create 3D representations.

- OVIE is significantly faster and more efficient, outperforming previous methods.

Quick Summary

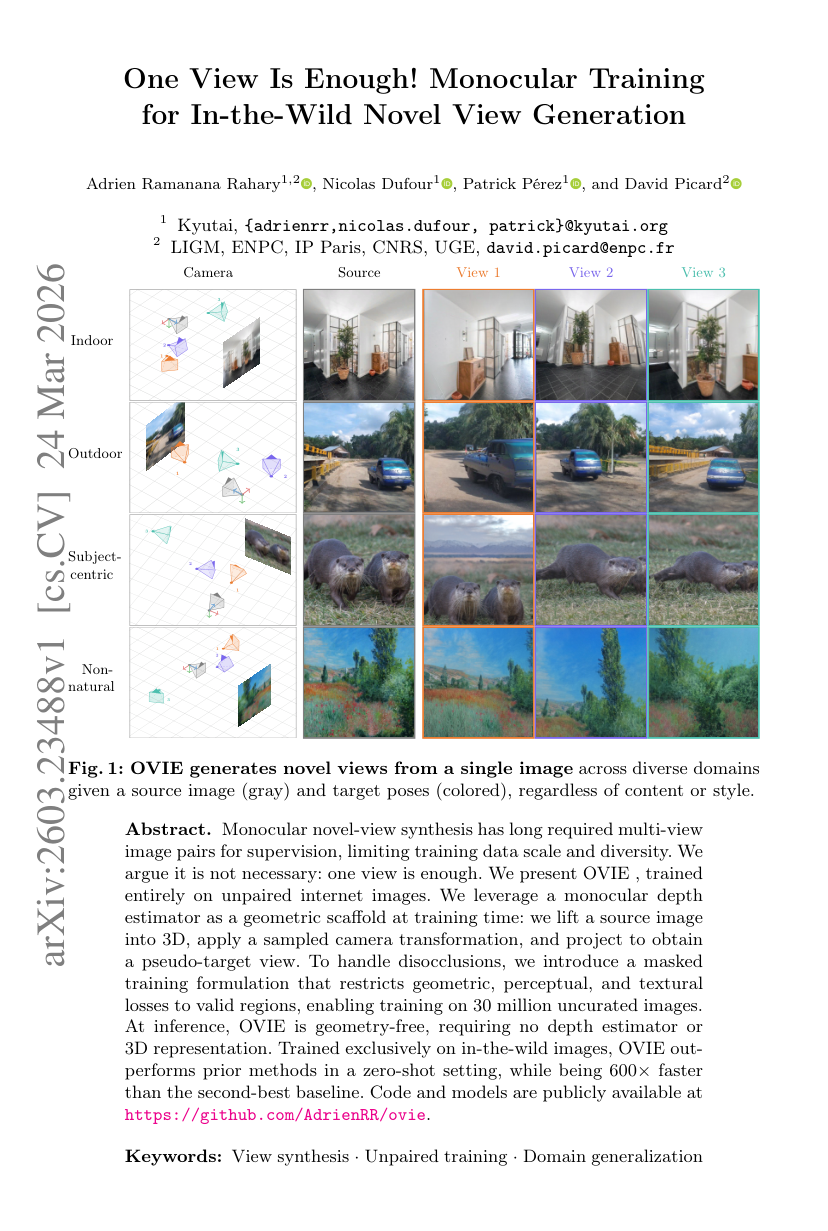

Monocular novel-view synthesis, a technique that generates new views of a scene from a single image, has historically relied on pairs of images from multiple angles. This dependency has limited the scale and diversity of training data, making it challenging to develop robust models. However, recent research introduces OVIE, a novel approach that demonstrates that training on just one image is sufficient.

OVIE, which stands for One View Image Encoder, is trained entirely on unpaired images sourced from the internet. This innovation opens up the possibility of utilizing vast amounts of uncurated data, which traditional methods could not effectively harness. At its core, OVIE employs a monocular depth estimator—a tool that estimates depth from a single image—to create a 3D representation of the source image. This 3D model is then manipulated through sampled camera transformations to generate what is termed a “pseudo-target view.”

To address the challenge of disocclusions—areas in the image that become visible when the viewpoint changes—OVIE introduces a masked training formulation. This method restricts the model’s focus to valid regions of the image, allowing it to optimize geometric, perceptual, and textural losses effectively. Consequently, OVIE can be trained on an impressive dataset of 30 million uncurated images, vastly expanding the potential for diverse training scenarios.

The implications of OVIE are significant. At inference time, the model operates without needing a depth estimator or a 3D representation, making it geometry-free. This simplicity not only enhances its usability but also speeds up the process. In fact, OVIE is reported to be 600 times faster than the second-best baseline method while outperforming prior techniques in zero-shot settings—meaning it can generate new views without needing additional training on specific scenes.

This breakthrough in monocular novel-view synthesis could reshape how we approach image generation and manipulation, making it more accessible and efficient for various applications, from virtual reality to gaming and beyond.

Disclaimer: I am not the author of this great research! Please refer to the original publication here: https://arxiv.org/pdf/2603.23488v1