Key Takeaways

- The demand for efficient fine-tuning methods for large AI models is increasing, leading to the development of techniques like Low-Rank Adaptation (LoRA).

- A new method, Cross-Model Low-Rank Adaptation (LoRA-X), allows the transfer of fine-tuning parameters between different AI models without needing original training data.

- This innovation addresses challenges related to data accessibility and privacy, making the fine-tuning process more practical and efficient.

Quick Summary

As artificial intelligence continues to evolve, the need for efficient fine-tuning methods for large foundation models has surged. Traditional fine-tuning methods often require access to the original training data, which can be problematic due to privacy concerns or licensing restrictions. This has led to the development of techniques like Low-Rank Adaptation (LoRA), which fine-tune models while only adding a few parameters specific to the task at hand. However, when these base models are updated or replaced, the associated LoRA modules must also be retrained, creating a significant hurdle if the original data is unavailable.

To tackle this issue, researchers have introduced a novel approach called Cross-Model Low-Rank Adaptation (LoRA-X). This method enables the transfer of LoRA parameters from one model to another without the need for the original training data or synthetic data that closely resembles it. The innovation lies in the adapter’s ability to operate within a specific subspace of the original model, which is necessary because the new model’s characteristics are not fully known. By focusing on layers that show a reasonable level of similarity between the source and target models, LoRA-X facilitates the effective transfer of parameters.

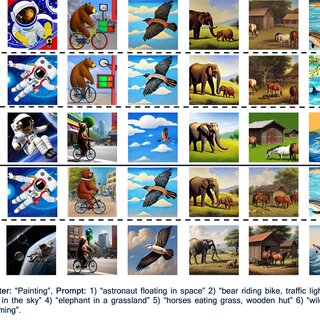

Extensive experiments conducted using this new method have shown promising results, particularly in applications such as text-to-image generation with models like Stable Diffusion v1.5 and Stable Diffusion XL. The success of LoRA-X not only simplifies the fine-tuning process but also makes it more accessible, as it circumvents the limitations posed by data privacy and availability. This advancement has significant implications for the future of AI model development, potentially leading to more agile and adaptable systems that can evolve without being hindered by data constraints.

Disclaimer: I am not the author of this great research! Please refer to the original publication here: PDF Link