Key Takeaways

- This study compares the performance of two machine translation systems, DeepL and Supertext, focusing on their ability to translate unsegmented texts.

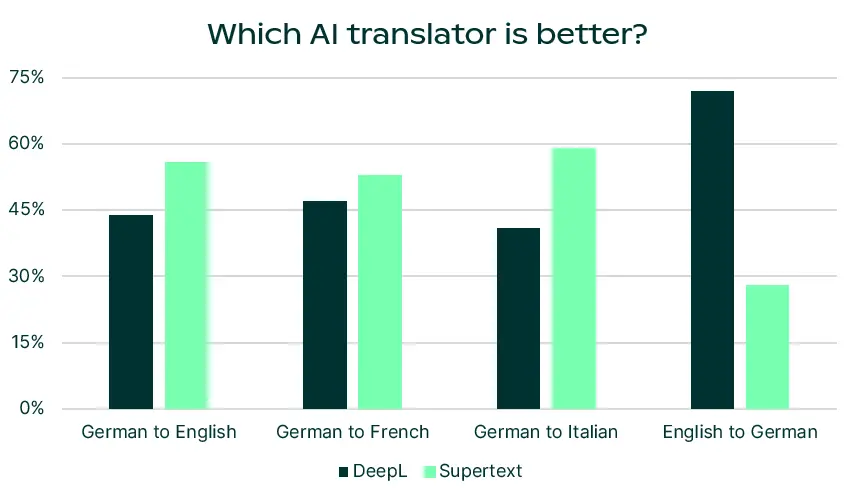

- While both systems performed similarly in segment-level assessments, Supertext showed superior consistency in document-level evaluations across three out of four language directions.

- The research emphasizes the need for context-sensitive evaluation methods to better reflect the usability of machine translation systems in real-world scenarios.

Quick Summary

As machine translation (MT) technology advances, particularly with the integration of large language models (LLMs), the need for effective ways to evaluate MT systems grows. A recent study compared two prominent commercial MT systems, DeepL and Supertext, by examining their translation quality on unsegmented texts, that is, entire documents rather than isolated segments. This setup matters because it mirrors real-world use, where people typically need complete documents translated rather than single sentences.

Professional translators assessed the translation quality of both systems across four language directions. The initial evaluation, which looked at individual segments of text, revealed no significant preference for either system. At the document level, however, a clear trend emerged: Supertext outperformed DeepL in three of the four language directions tested. This suggests that Supertext is better at maintaining consistency and coherence across longer texts, which is essential for translations that are not only accurate but also contextually appropriate.
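To make "no significant preference" more concrete, here is a minimal sketch of one common way to check whether pairwise preference counts favor one system. It is an illustration only: this summary does not describe the study's actual statistical procedure, so the exact two-sided sign test, the function name `sign_test_p_value`, and the counts used below are all assumptions.

```python
from math import comb


def sign_test_p_value(wins_a: int, wins_b: int) -> float:
    """Exact two-sided sign test on pairwise preference counts.

    Assumption: ties (no preference) were dropped before counting.
    Under the null hypothesis, each judgment favors either system
    with probability 0.5.
    """
    n = wins_a + wins_b
    if n == 0:
        return 1.0  # no judgments, no evidence either way
    k = min(wins_a, wins_b)
    # Probability of seeing k or fewer wins for the weaker side under Binomial(n, 0.5)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    # Double the one-sided tail for a two-sided test, capped at 1
    return min(1.0, 2 * tail)


if __name__ == "__main__":
    # Hypothetical counts, NOT taken from the study.
    print(sign_test_p_value(52, 48))  # near-even split
    print(sign_test_p_value(70, 30))  # lopsided split
```

A near-even split yields a large p-value (no detectable preference), while a lopsided split yields a small one. The same test can be applied to segment-level and document-level judgments separately, which is the kind of comparison the summary describes.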

The findings highlight the limitations of traditional segment-level evaluation, which can miss problems that only surface across longer texts, such as inconsistency. The study therefore argues for more context-sensitive evaluation methods, so that assessments of MT quality align more closely with the practical needs of users. Such methods could in turn guide improvements to MT systems for real-world applications.

For those interested in further exploration, the researchers have made their evaluation data and scripts publicly available, promoting transparency and encouraging additional analysis in the field of machine translation.

Disclaimer: I am not the author of this great research! Please refer to the original publication here: https://arxiv.org/pdf/2502.02577.pdf