Category: Computing

-

SpecEyes: Accelerating Agentic Multimodal LLMs via Speculative Perception and Planning

Discover how the SpecEyes framework uses speculative perception and planning to speed up agentic multimodal large language models (MLLMs) while improving accuracy in AI applications.

-

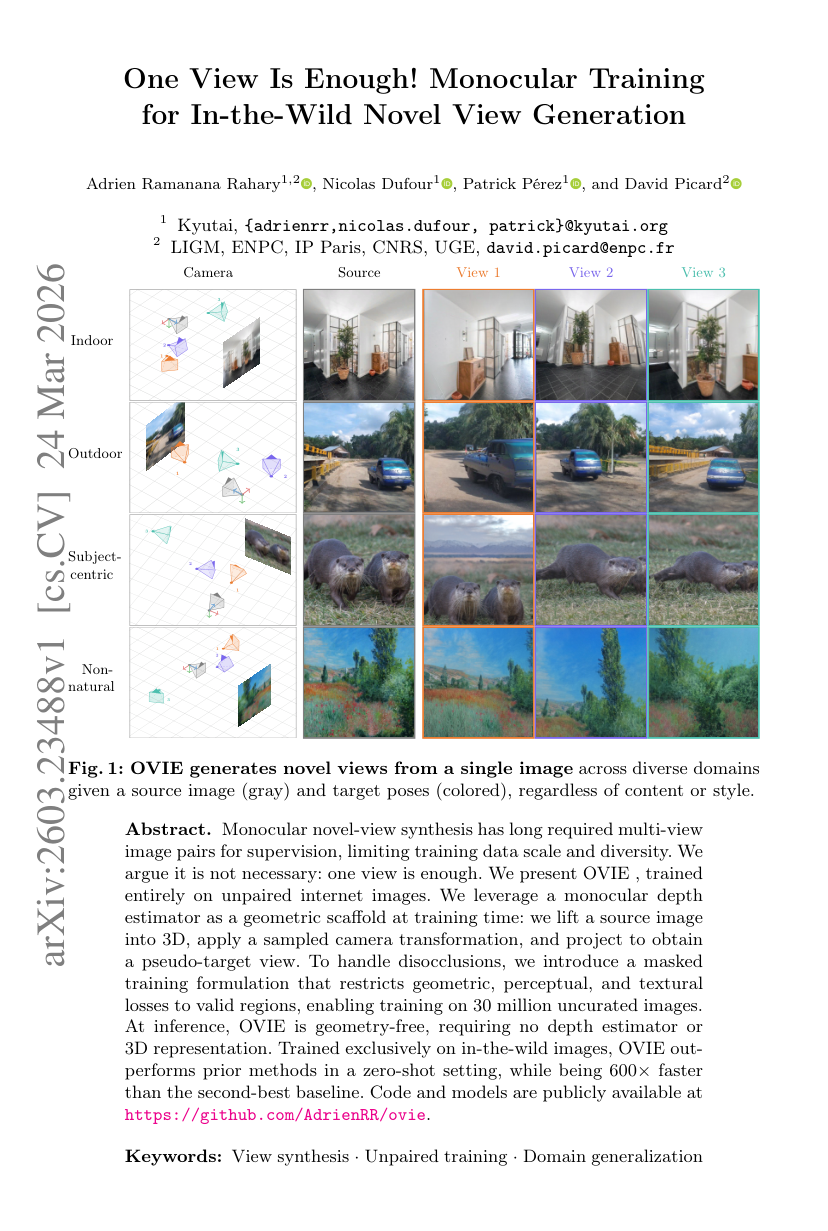

One View Is Enough! Monocular Training for In-the-Wild Novel View Generation

Discover how OVIE transforms monocular novel-view synthesis by training on single images, achieving faster and more efficient results than traditional methods.

-

VISion On Request: Enhanced VLLM efficiency with sparse, dynamically selected, vision-language interactions

Explore how VISOR enhances the efficiency of Large Vision-Language Models, preserving visual information while reducing computational costs for complex tasks.

-

Exploring Novel Data Storage Approaches for Large-Scale Numerical Weather Prediction

Explore the performance of DAOS and Ceph object storage systems for HPC and AI applications, highlighting their advantages over traditional file systems.

-

When to Trust the Cheap Check: Weak and Strong Verification for Reasoning

Explore the balance between weak and strong verification in large language models, enhancing output trustworthiness while managing resources effectively.

-

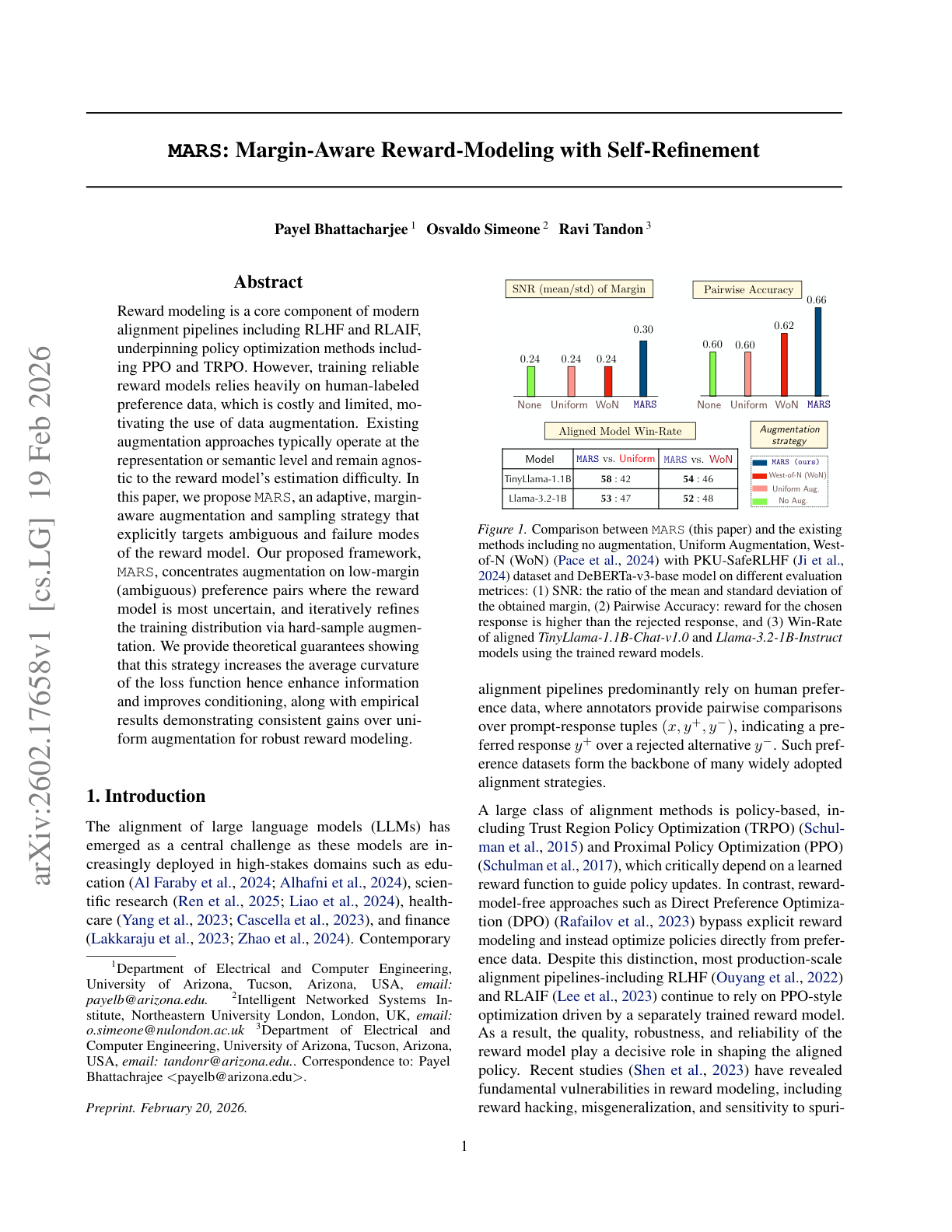

MARS: Margin-Aware Reward-Modeling with Self-Refinement

Discover how MARS enhances reward modeling in AI by targeting ambiguous preference pairs for improved training efficiency.

-

Reverso: Efficient Time Series Foundation Models for Zero-shot Forecasting

Discover efficient time series foundation models for zero-shot forecasting, including the Reverso family, which balances performance with reduced model size.